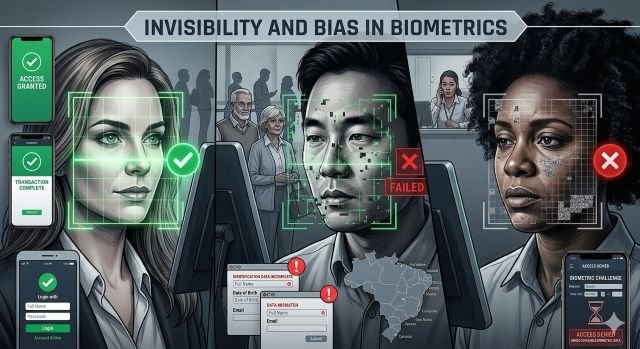

The Invisible Friction of Biometrics: How Black, Asian and Other Groups Face More Errors – and Why Brazil Still Ignores the Problem

By Henrique S.G. Costa and Robinson Lamas

Introduction

At a digital identity event, I asked a director at a large marketplace whether facial recognition solutions affected Afro-descendant people differently. After an awkward silence, the answer was: “we don’t see problems.” It was an easy, and possibly wrong, response. The problem exists; what’s missing is measurement. Biometrics are already part of the digital journey for millions of Brazilians, but not everyone is recognized equally well.

The presence of biometrics in everyday life

Digital banks, carriers, ride‑hailing apps, e‑commerce platforms and public services rely on facial or fingerprint recognition to validate identities. The promise is security and speed. In practice, however, the technology does not work equally for everyone: historically underrepresented groups — Afro‑descendants, Asians and other ethnic minorities — face much higher error rates, with consequences ranging from frustration to exclusion and unjust suspicion of fraud.

Numbers that reveal inequality

International studies document significant biases in facial recognition algorithms. Independent tests have shown striking discrepancies: much higher false‑positive rates for darker‑skinned people and for Asians.

The National Institute of Standards and Technology (NIST) report, one of the most comprehensive in the world, analyzed 189 facial recognition algorithms and found marked discrepancies:

- False‑positive rates up to 100 times higher for Afro‑descendants and Asians compared with white people.

- In some algorithms, Afro‑descendant women had identification error rates above 30%.

- Widely used commercial systems showed error rates between 10% and 35% for darker-skinned faces.

These figures are not abstractions: they translate into blocked accounts, abandoned registrations and endless verification processes.

Cases and reports in Brazil

Although there are no official ethnicity‑segmented data in the country, platforms like Reclame Aqui accumulate hundreds of complaints about facial recognition failures at digital banks, fintechs and carriers. Patterns repeat: “the system doesn’t recognize my face,” “my account was blocked for no reason,” “I had to try many times,” “I only solved it with human support.” Social media reports illustrate the impact: customers who had to send multiple videos to prove their identity; ride‑hail drivers suspended for “improper use” when, in fact, the system failed to recognize their face.

The invisible cost for companies and customers

Each failure creates friction in the customer journey, increases service time and raises operational costs. Companies report that up to 20% of registration abandonments may be linked to identity verification problems — a direct loss of revenue. In the financial sector, wrongful blocks reduce app usage and affect engagement. On the reputational front, consumers who face repeated failures share their negative experiences, amplifying the perception that the company “doesn’t work for me.”

Why Brazil still ignores it

Part of the problem is technological governance: no major Brazilian company publishes metrics segmented by ethnicity, gender or age group, and there are neither public audits nor regulations requiring such monitoring. Without data, each case becomes an isolated incident instead of a symptom of a structural problem. Meanwhile, the impact remains invisible to managers and painfully visible to those who live the experience.

The business perspective

Here is a connection few people make: this is not just about inclusion, it is about business.

Each failure generates:

- Registration abandonment;

- Higher operational costs;

- Lower conversion;

- Pressure on customer support;

- And, most dangerous of all, loss of trust.

Because the customer doesn’t think, “the algorithm failed,” they think, “this company doesn’t work for me.”

If you work in CX, product or technology, try a quick test.

Does your company measure:

- Failure rate by customer profile?

- Abandonment at the validation step?

- How many customers are escalated to human support?

If the answer is “no,” you may be losing customers without knowing why. Biometrics were created to recognize people, but today they often determine who gets recognized and who does not.
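The three questions above can usually be answered from verification event logs. Here is a minimal sketch, assuming a hypothetical log in which each validation session carries a customer-profile label (collected lawfully and with consent), the number of automated failures, and the final outcome. The class, field names and outcome values are illustrative assumptions, not taken from any particular platform.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical outcome labels for the identity-validation step.
PASSED = "passed"        # verified automatically
ESCALATED = "escalated"  # resolved only through human support
ABANDONED = "abandoned"  # customer gave up at this step


@dataclass
class VerificationSession:
    segment: str       # hypothetical customer-profile label
    failed_tries: int  # automated failures before the final outcome
    outcome: str       # one of PASSED, ESCALATED, ABANDONED


def funnel_metrics(sessions):
    """Per segment: share of sessions with at least one automated failure,
    share abandoned at validation, and share escalated to human support."""
    counts = defaultdict(
        lambda: {"total": 0, "failed": 0, "abandoned": 0, "escalated": 0}
    )
    for s in sessions:
        c = counts[s.segment]
        c["total"] += 1
        c["failed"] += s.failed_tries > 0
        c["abandoned"] += s.outcome == ABANDONED
        c["escalated"] += s.outcome == ESCALATED
    return {
        seg: {
            "n": c["total"],
            "failure_rate": c["failed"] / c["total"],
            "abandonment_rate": c["abandoned"] / c["total"],
            "escalation_rate": c["escalated"] / c["total"],
        }
        for seg, c in counts.items()
    }


# Toy data, invented purely for illustration.
sample = [
    VerificationSession("A", 0, PASSED),
    VerificationSession("A", 1, PASSED),
    VerificationSession("B", 3, ESCALATED),
    VerificationSession("B", 2, ABANDONED),
]
metrics = funnel_metrics(sample)
```

Keeping the session count `n` next to each rate matters: with few sessions in a segment, a large gap between rates may be noise rather than evidence of bias.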

Paths to inclusive biometrics

To turn the technological promise into fair practice, concrete measures are needed:

- Measure performance by demographic group.

- Have independent entities audit algorithms regularly.

- Require diversity in training datasets.

- Offer alternative authentication methods that are equally secure.

- Communicate procedures and failures to users with transparency.
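The first measure in the list, performance by demographic group, can be sketched in a few lines. The example assumes a hypothetical labeled evaluation set in which each comparison records the group, whether the two images really belong to the same person, and whether the system declared a match. All function and field names are illustrative. It reports the false match rate (impostor pairs accepted) and the false non-match rate (genuine pairs rejected) per group, plus each group's false match rate relative to the lowest non-zero one.

```python
from collections import defaultdict


def error_rates_by_group(trials):
    """trials: iterable of (group, same_person, system_matched) tuples.

    Returns {group: {"fmr": ..., "fnmr": ...}}. A rate is None when the
    group has no impostor (FMR) or no genuine (FNMR) comparisons.
    """
    tally = defaultdict(lambda: {"impostor": 0, "false_match": 0,
                                 "genuine": 0, "false_non_match": 0})
    for group, same_person, system_matched in trials:
        t = tally[group]
        if same_person:
            t["genuine"] += 1
            t["false_non_match"] += not system_matched
        else:
            t["impostor"] += 1
            t["false_match"] += bool(system_matched)
    return {
        g: {
            "fmr": t["false_match"] / t["impostor"] if t["impostor"] else None,
            "fnmr": t["false_non_match"] / t["genuine"] if t["genuine"] else None,
        }
        for g, t in tally.items()
    }


def fmr_disparity(rates):
    """Ratio of each group's FMR to the lowest non-zero FMR observed."""
    nonzero = [r["fmr"] for r in rates.values() if r["fmr"]]
    if not nonzero:
        return {}
    baseline = min(nonzero)
    return {g: r["fmr"] / baseline
            for g, r in rates.items() if r["fmr"] is not None}


# Toy evaluation set, invented purely for illustration.
trials = [
    ("X", False, False), ("X", False, False), ("X", False, False), ("X", False, True),
    ("X", True, True), ("X", True, True),
    ("Y", False, True), ("Y", False, True), ("Y", False, False), ("Y", False, False),
    ("Y", True, False), ("Y", True, True),
]
rates = error_rates_by_group(trials)
disparity = fmr_disparity(rates)
```

In this toy set, group Y's false match rate is twice that of group X. A real audit would need far larger samples per group and should report the underlying counts or confidence intervals alongside the ratios.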

Technology is only inclusive when it works for everyone. Until its performance is measured, the problem will remain invisible to companies and painfully visible to those who experience it every day. In the meantime, offering alternative paths prevents specific groups from being systematically penalized.